Projects

Ongoing Projects

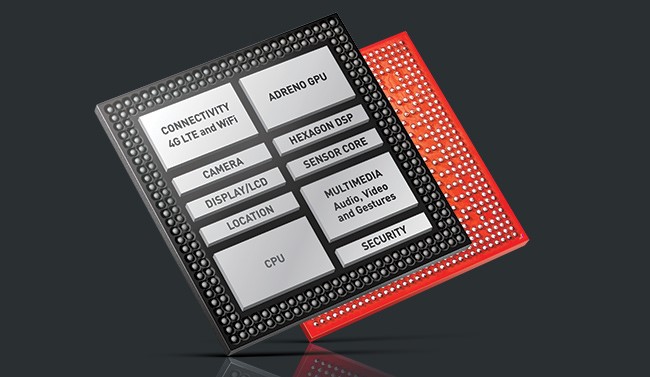

Interference-Aware Microarchitecture and SoC-Level Resource Management for Mitigating CPU-NPU Contention in Ultra-Low-Latency On-Device AI SoCs

Project Period: 2026.03.01 - 2030.02.28

Funding Agency: National Research Foundation of Korea (NRF)

Program: Individual Research, Young Researcher Program (Type B)

Description

This project develops CPU microarchitecture and SoC-level resource management techniques to mitigate contention between CPUs and NPUs over shared on-chip resources in on-device AI systems. The research aims to enable predictable and ultra-low-latency AI inference on heterogeneous AI SoCs.

Training Program for Semiconductor Core IP Design Specialists (High-Performance Processors)

Project Period: 2026.03.01 - 2031.02.28

Funding Agency: Korea Institute for Advancement of Technology (KIAT)

Program: Industrial Innovation Talent Growth Support Program

Description

This project aims to systematically cultivate industry-ready professionals capable of designing core IP for high-performance processors and AI semiconductors through an industry-driven curriculum integrated with collaborative academia–industry projects.

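AI Semiconductor Innovation Lab (Yonsei University)

Project Period: 2025.07.01 - 2030.12.31

Funding Agency: Institute of Information & Communications Technology Planning & Evaluation (IITP)

Program: Industry-Academia Talent Development Program for Leading AI Semiconductor Technologies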

Description

This project establishes the Chips and AI Innovation Center (CINC) at Yonsei University to foster globally competitive researchers in AI semiconductor technologies. The program supports advanced education and collaborative research on next-generation AI accelerators and semiconductor system architectures.

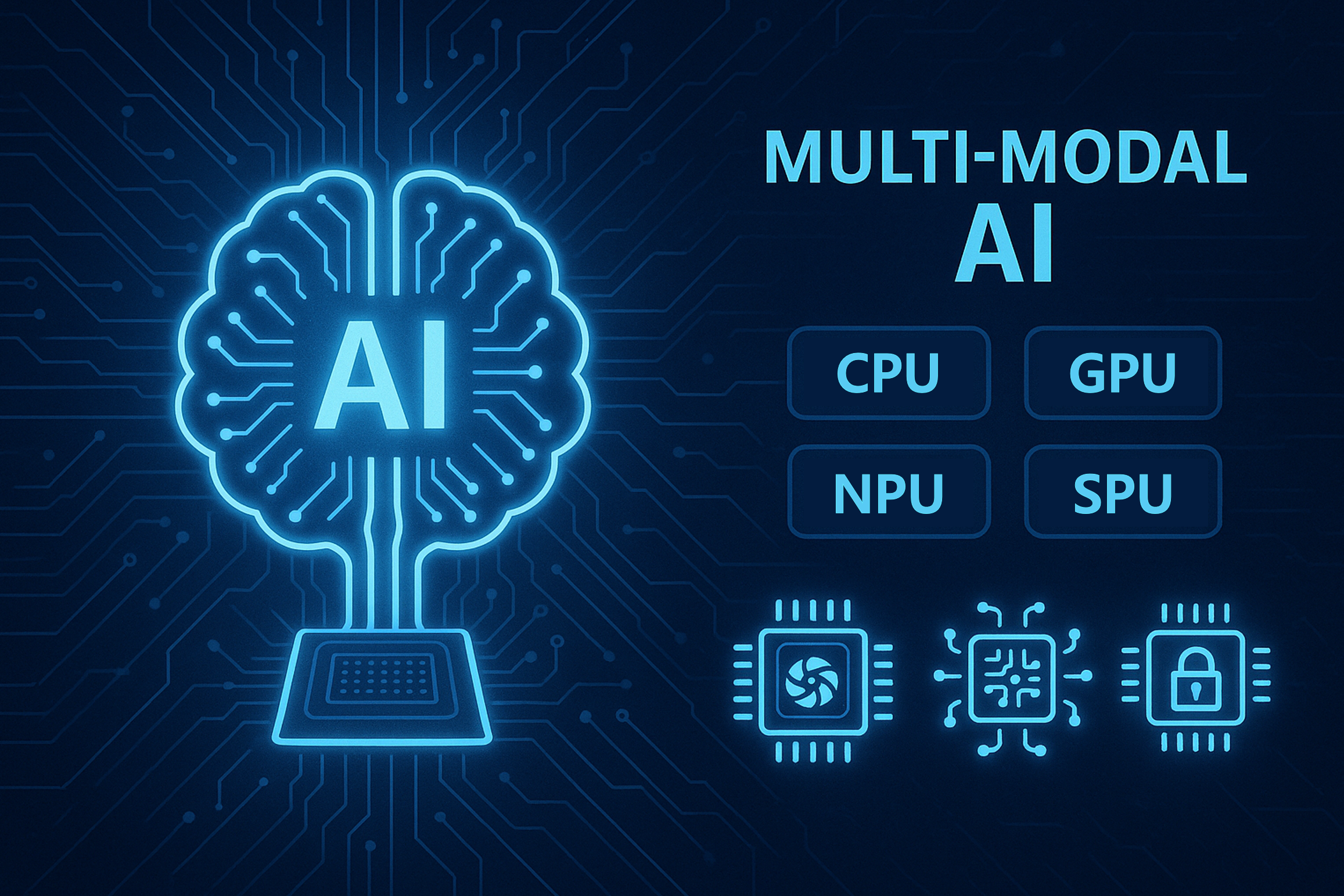

Basic Research Laboratory for Energy-Efficient, General-Purpose Multi-Modal AI with Heterogeneous Computing Accelerators

Project Period: 2025.06.01 - 2028.05.31

Funding Agency: National Research Foundation of Korea (NRF)

Program: Group Research, Basic Research Laboratory (BRL)

Description

This project develops a heterogeneous acceleration platform for energy-efficient, general-purpose multi-modal AI systems. By integrating diverse computing accelerators and system-level optimizations, the project aims to enable efficient processing of multi-modal AI workloads.

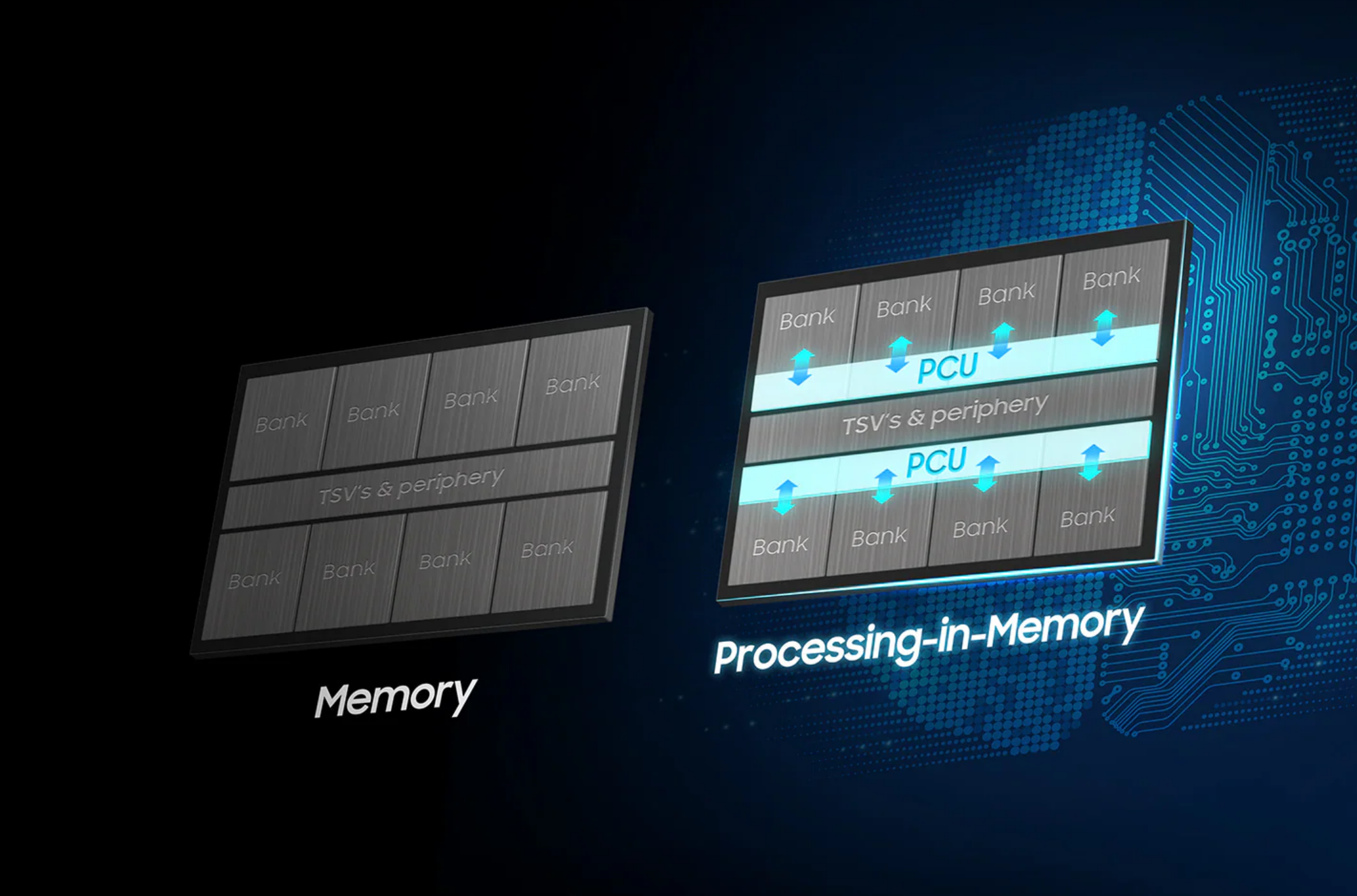

Development of CXL-based PNM Architecture and Simulation Platform for LLM Acceleration

Project Period: 2024.04.01 - 2026.12.31

Funding Agency: Korea Planning & Evaluation Institute of Industrial Technology (KEIT)

Program: Public-Private Joint Investment Program for Advanced Semiconductor Talent Development

Description

This project develops a CXL-based processing-near-memory (PNM) architecture and simulation platform to accelerate large language model (LLM) workloads. The research focuses on enabling efficient memory-centric acceleration by leveraging CXL-enabled memory expansion and near-memory computing techniques.

Past Projects

Development of PIM Software Architecture based on Data-Flow Computing

Project Period: 2024.04.01 - 2025.12.31

Funding Agency: Institute of Information & Communications Technology Planning & Evaluation (IITP)

Program: PIM AI Semiconductor Core Technology Development

Description

This project develops core computing architectures and software technologies for PIM-based main memory and heterogeneous accelerator platforms. The scope covers dataflow-driven programming and execution models, compilers, development tools, operating system support (drivers, memory management, scheduling), runtimes, and application-specific libraries for AI and other workloads.

Past Projects (Before Joining Yonsei SSE)

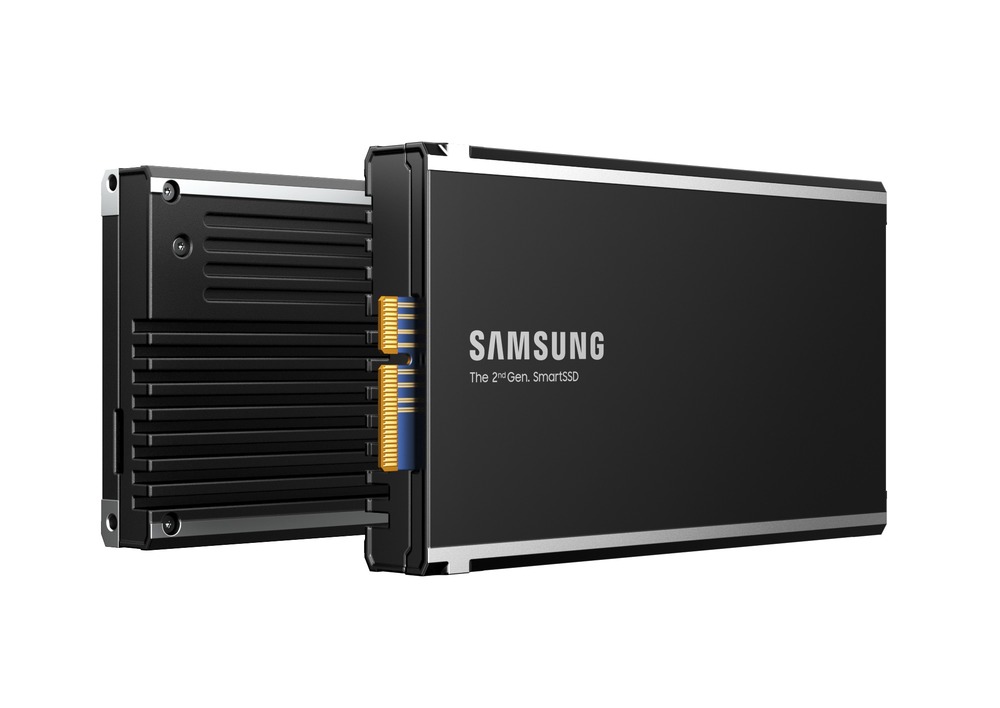

SmartSSD 2.0: Developing Next-Generation Computational Storage Drive

(2020.09 - 2021.08)

- Research and development project at Samsung Electronics

- Designing an SoC (System-on-Chip) for next-generation CSDs (Computational Storage Drives)

- A prototype was announced at Flash Memory Summit (FMS) 2022

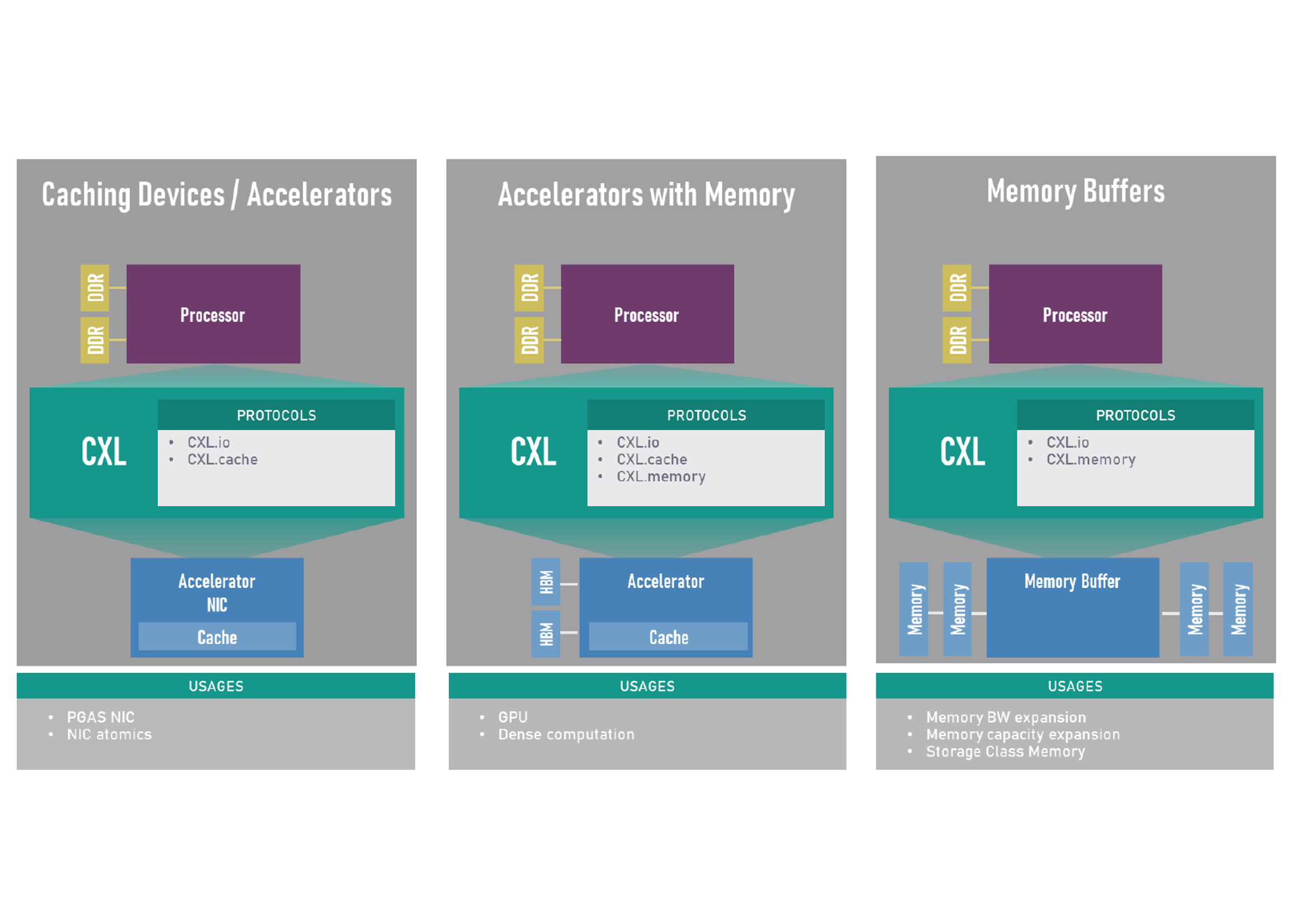

Developing CXL-Based Accelerator and Memory Expansion Device

(2020.03 - 2020.08)

- Research and development project at Samsung Electronics

- Developing a CXL (Compute Express Link) Type 2 accelerator and a Type 3 memory expansion device that leverage NAND flash

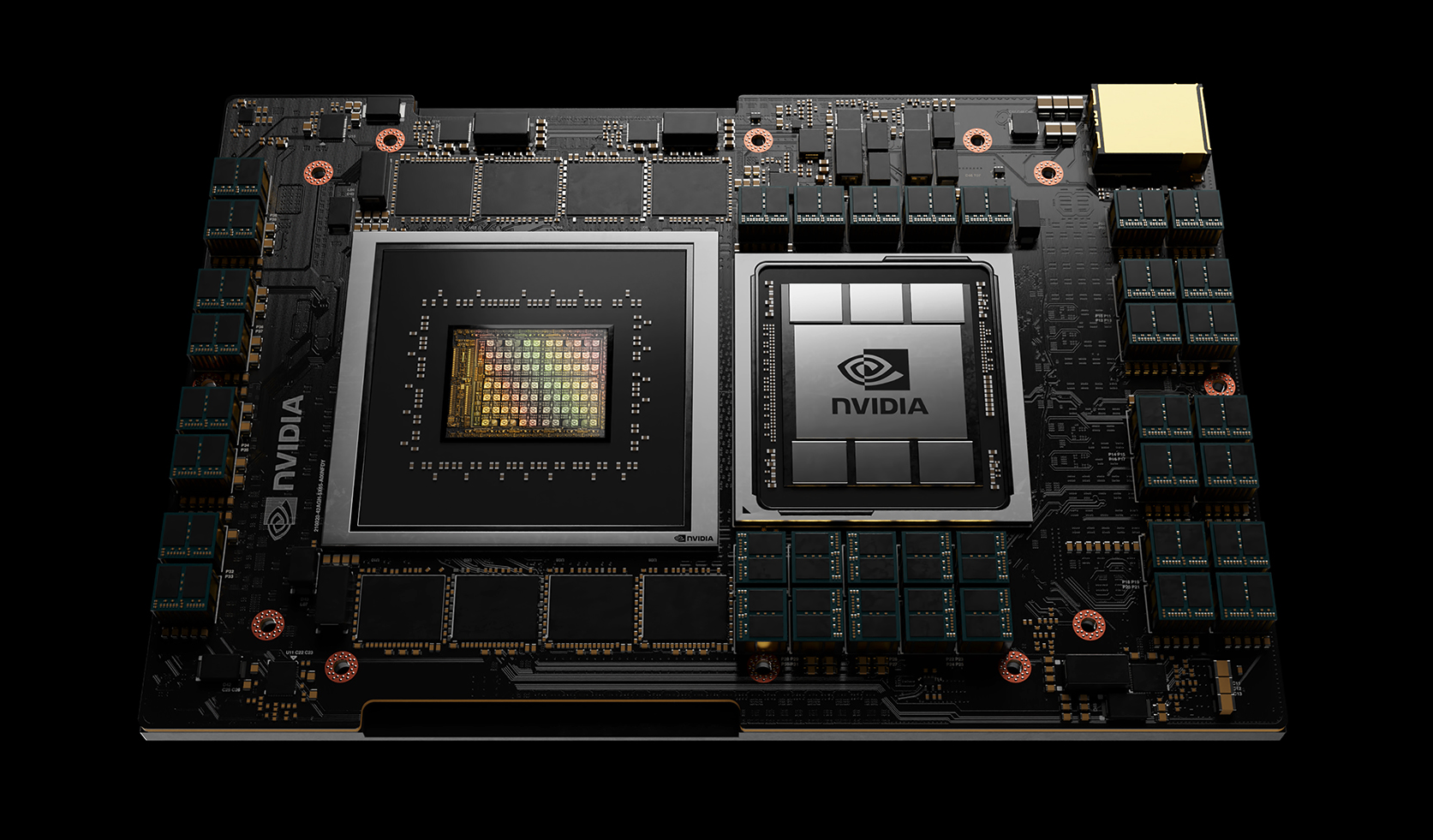

Developing CPU-GPU Heterogeneous Computing Simulation Framework

(2020.03 - 2020.08)

- Research and development project at Samsung Electronics

- Developing a CXL (Compute Express Link) Type 2 accelerator and a Type 3 memory expansion device that leverage NAND flash

Developing Energy-Efficient Approximate Memory for Neural Network Applications

(2018.07 - 2019.06)

- Joint research project between Yonsei University and SK Hynix

- Exploring an energy-efficient approximate memory architecture for deep learning applications

Developing Processor and Memory System for Next-Generation Security Platform

(2017.09 - 2018.08)

- Joint research project between Yonsei University and Samsung Electronics

- Developing ASIPs (Application-Specific Instruction-Set Processors) for cryptographic algorithms (e.g., AES, SHA-256, and RSA-2048)